Artificial intelligence has changed the way modern SaaS products are designed, built, and scaled. From AI copilots and document intelligence to autonomous workflows and predictive analytics, AI-native platforms have become the new standard for enterprise software.

However, building a successful AI SaaS platform from scratch is very different from integrating a chatbot into an existing application. You need to think about AI architecture, scalable infrastructure, data strategy, operational reliability, cost optimisation, product design, and user experience, and get them right from the start.

This guide walks you through a practical technical roadmap for building an AI SaaS product from scratch, from ideation to production-scale deployment.

The approach reflects cloud-native and AI-first engineering practices commonly used in scalable SaaS platforms and enterprise AI systems.

1. Start with the Right AI Problem

Most AI products fail before a single line of code is written.

The first step should not be selecting a fancy LLM or vector database. It is to find a real workflow where AI makes a clear difference.

Good AI SaaS opportunities typically involve:

- Repetitive manual workflows

- Large volumes of unstructured data

- Decision support

- Content generation

- Search and retrieval problems

- Process automation

- Operational intelligence

- Human-in-the-loop review systems

Examples include:

| Industry | AI SaaS Opportunity |
| --- | --- |
| Legal | Contract analysis and clause extraction |
| Finance | Invoice processing and reconciliation |
| Healthcare | Medical summarisation and documentation |
| Real Estate | Property intelligence and tenant workflows |
| Sales | AI-driven CRM automation |
| Customer Support | AI copilots and automated responses |
| Logistics | Intelligent order processing |

The most successful AI SaaS products solve real business bottlenecks in actual workflows.

2. Define the SaaS Business Model Early

Before writing code, get clear on the basics.

Questions to answer:

- Is this SaaS genuinely multi-tenant?

- Will customers upload their own data?

- Does the AI need to respond in real time, or can it run asynchronously?

- Does the product require role-based access?

- Will the platform support white-labelling?

- What are the compliance needs (GDPR, HIPAA, SOC 2)?

- What is the pricing model?

Most AI SaaS products today follow one of these models:

| Model | Description |
| --- | --- |
| Usage-Based | Pay per API call, token, or workflow |
| Subscription | Monthly SaaS pricing tiers |
| Seat-Based | Pricing per user |
| Hybrid | Subscription + AI usage charges |

Your pricing model directly shapes many later technical decisions. Usage-based and hybrid pricing, for example, require per-tenant metering of tokens and API calls.
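To make the link between pricing and engineering concrete, here is a minimal sketch of per-tenant usage metering for a hybrid plan. The class name, method names, and all prices are hypothetical and chosen only for illustration; a real system would persist usage in a database rather than in memory.

```python
from collections import defaultdict


class UsageMeter:
    """Accumulates token usage per tenant for one billing period (in memory)."""

    def __init__(self) -> None:
        self._tokens = defaultdict(int)  # tenant_id -> tokens used

    def record(self, tenant_id: str, prompt_tokens: int, completion_tokens: int) -> None:
        # Called once per AI request, after the provider reports token counts.
        self._tokens[tenant_id] += prompt_tokens + completion_tokens

    def tokens_used(self, tenant_id: str) -> int:
        return self._tokens.get(tenant_id, 0)

    def hybrid_invoice(
        self,
        tenant_id: str,
        base_fee: float,
        included_tokens: int,
        price_per_1k_overage: float,
    ) -> float:
        # Hybrid model: flat subscription fee plus a charge for tokens
        # beyond the allowance included in the plan.
        overage = max(0, self.tokens_used(tenant_id) - included_tokens)
        return round(base_fee + (overage / 1000) * price_per_1k_overage, 2)
```

For example, a tenant on a hypothetical plan with a 99.00 base fee and 1,000,000 included tokens who uses 1,100,000 tokens at 0.02 per 1,000 overage tokens would be invoiced 101.00.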

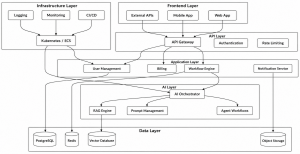

3. Design the Core AI Architecture

A modern AI SaaS platform typically comprises multiple layers.

Recommended Architecture

This modular architecture supports:

- Independent scaling

- Easier deployments

- Better observability

- AI provider abstraction

- Future extensibility

Modern enterprise AI platforms increasingly use modular cloud-native architectures similar to those used in scalable SaaS and workflow systems.

4. Choose the Right AI Stack

Large Language Models (LLMs)

For LLMs, you have three usual choices:

| Option | Best For |
| --- | --- |
| OpenAI | Fastest MVP development or prototypes |
| Azure OpenAI | Enterprise compliance |
| Open-source LLMs | Full control and cost optimisation |

Most start-ups go with hosted APIs because they offer:

- Faster launches

- Less operational overhead

- Lower infrastructure complexity

Common models:

- OpenAI GPT-4

- Claude

- Gemini

- Llama-based models

AI Frameworks

Useful orchestration frameworks include:

| Framework | Purpose |
| --- | --- |
| LangChain | Workflow orchestration |
| LlamaIndex | RAG pipelines |
| Semantic Kernel | Enterprise orchestration |
| Haystack | Search and retrieval |
| CrewAI | Multi-agent workflows |

AI orchestration patterns such as RAG pipelines, prompt workflows, and autonomous agents are now widely used in enterprise AI systems.

Vector Databases

Scalable vector databases are essential for features like search, recommendations, document intelligence, and AI memory.

Popular choices:

- Pinecone

- Weaviate

- Qdrant

- pgvector

- OpenSearch

Use cases:

- Document search

- Similarity matching

- AI memory

- Recommendation systems

- Knowledge retrieval

5. Build the MVP Fast — But Correctly

The first version should focus on validating:

- User value

- Workflow usability

- AI accuracy

- Response speed

- Cost feasibility

Start small. Avoid over-engineering.

Recommended MVP Stack

| Layer | Suggested Stack |
| --- | --- |
| Frontend | React / Next.js |
| Backend | Node.js / NestJS / FastAPI |
| Database | PostgreSQL |
| Vector DB | pgvector or Pinecone |
| Authentication | Auth0 / Clerk / Cognito |
| File Storage | AWS S3 |
| AI APIs | OpenAI / Azure OpenAI |
| Queue | BullMQ / RabbitMQ |
| Deployment | AWS ECS / Kubernetes |

This stack enables rapid iteration and can scale when you need it to.

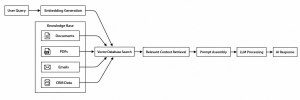

6. Implement Retrieval-Augmented Generation (RAG)

If your product works with business-specific knowledge, it will eventually need RAG.

Why?

Because an LLM on its own knows nothing about your customers' private documents and data, so it cannot answer business-specific questions reliably.

RAG Workflow Diagram

RAG allows your AI system to:

- Ground answers in real business data

- Improve response accuracy

- Reduce hallucinations

- Give the model better context

RAG architectures are now standard for document analysis, enterprise search, and AI assistant platforms.
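The retrieve-then-generate flow can be sketched in a few lines. This is a toy illustration, not a production pipeline: `embed` is a stand-in bag-of-words function (a real system would call an embedding model and a vector database), and the function names and prompt wording are assumptions made for the example.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts. Stands in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, documents: list[str], k: int = 2) -> str:
    """Augment the user question with retrieved context before calling an LLM."""
    context = "\n".join(f"- {chunk}" for chunk in retrieve(query, documents, k))
    return (
        "Answer using only the context below. "
        "If the answer is not in the context, say so.\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )
```

The string returned by `build_prompt` is what would be sent to the model, so the answer is grounded in retrieved business data rather than in the model's training data alone.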

7. Build an AI Orchestration Layer

Do not call LLM APIs straight from your frontend applications.

Instead, create an orchestration layer that is responsible for:

- Prompt management

- Provider switching

- AI workflow execution

- Model selection

- Response validation

- Retry handling

- Context management

- Guardrails

- Logging and observability

AI Orchestration Workflow

This layer becomes the backend “brain” of the platform.

A mature orchestration layer also enables switching between models later without rewriting business logic.
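As a rough sketch of what such a layer might look like, the example below renders a prompt template, tries providers in order, retries on errors, and validates each response before returning it. `Provider`, `Orchestrator`, and the two stub providers are hypothetical names invented for this illustration; real provider classes would wrap the vendor SDK of your choice.

```python
from typing import Callable, Protocol, Sequence


class Provider(Protocol):
    """Minimal interface every model provider adapter is assumed to expose."""
    name: str

    def complete(self, prompt: str) -> str: ...


class Orchestrator:
    """Renders a prompt, then tries each provider in order with retries."""

    def __init__(
        self,
        providers: Sequence[Provider],
        validate: Callable[[str], bool],
        max_attempts: int = 2,
    ) -> None:
        self.providers = list(providers)
        self.validate = validate
        self.max_attempts = max_attempts

    def run(self, template: str, **variables: str) -> tuple[str, str]:
        prompt = template.format(**variables)
        errors: list[str] = []
        for provider in self.providers:
            for attempt in range(1, self.max_attempts + 1):
                try:
                    output = provider.complete(prompt)
                except Exception as exc:  # network errors, timeouts, etc.
                    errors.append(f"{provider.name} attempt {attempt}: {exc}")
                    continue
                if self.validate(output):
                    return provider.name, output
                errors.append(f"{provider.name} attempt {attempt}: failed validation")
        raise RuntimeError("all providers failed: " + "; ".join(errors))


class UnavailableProvider:
    """Stub that simulates an outage at the primary provider."""
    name = "primary"

    def complete(self, prompt: str) -> str:
        raise ConnectionError("provider unavailable")


class EchoProvider:
    """Stub fallback that returns a canned answer."""
    name = "fallback"

    def complete(self, prompt: str) -> str:
        return f"[stub answer to] {prompt}"
```

Because business logic only calls `Orchestrator.run`, adding or reordering providers does not require changes elsewhere in the codebase.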

8. Design for Multi-Tenancy from Day One

Most AI SaaS products are multi-tenant, which means you have to consider isolation and access for each customer.

Tenant Isolation

Ensure:

- Data segregation

- Role-based access

- Per-tenant embeddings and prompts

- Tenant-specific vector search

Typical approaches:

| Strategy | Use Case |
| --- | --- |
| Shared DB + Tenant ID | Early-stage SaaS |
| Schema-per-tenant | Mid-size growth |
| Database-per-tenant | Enterprise-grade isolation |

Multi-Tenant SaaS Architecture

Strict tenant isolation and role-based access controls let the same platform grow from a handful of customers to large enterprise contracts.
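For the shared-database strategy, tenant-specific vector search comes down to filtering by tenant ID before ranking. The following in-memory sketch illustrates the idea; `TenantScopedIndex` and its methods are hypothetical, and a real deployment would push the tenant filter into the vector database query itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    tenant_id: str
    text: str
    embedding: tuple[float, ...]


def _dot(a: tuple[float, ...], b: tuple[float, ...]) -> float:
    return sum(x * y for x, y in zip(a, b))


class TenantScopedIndex:
    """Shared index where every row carries a tenant ID."""

    def __init__(self) -> None:
        self._chunks: list[Chunk] = []

    def add(self, tenant_id: str, text: str, embedding: list[float]) -> None:
        self._chunks.append(Chunk(tenant_id, text, tuple(embedding)))

    def search(self, tenant_id: str, query_embedding: list[float], k: int = 3) -> list[str]:
        query = tuple(query_embedding)
        # Filter by tenant BEFORE ranking, so another tenant's data can
        # never appear in the results, however similar it is.
        candidates = [c for c in self._chunks if c.tenant_id == tenant_id]
        candidates.sort(key=lambda c: _dot(c.embedding, query), reverse=True)
        return [c.text for c in candidates[:k]]
```

The design choice worth noting is that `search` has no code path that omits the tenant filter, which makes accidental cross-tenant retrieval harder to introduce.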

9. Bring in Workflow Automation

AI becomes powerful when it is connected to real business processes.

Examples:

- Email automation

- Document parsing

- CRM updates

- Ticket routing

- Automated approvals

- Notification systems

- Data enrichment

Event-Driven AI Workflow

This event-driven pattern improves reliability, because failed steps can be retried independently, and scales more easily, because producers and consumers are decoupled.

Workflow automation and event-driven AI pipelines are increasingly common in enterprise automation systems.
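A minimal in-process sketch of the event-driven pattern is shown below, with retries and a dead-letter list for jobs that keep failing. In production this role would typically be played by a real queue such as BullMQ or RabbitMQ; the `EventBus` class and the event names used here are hypothetical.

```python
from collections import defaultdict, deque
from typing import Any, Callable

Handler = Callable[[Any], None]


class EventBus:
    """Tiny in-memory event queue with per-handler retries."""

    def __init__(self, max_attempts: int = 3) -> None:
        self.max_attempts = max_attempts
        self._handlers: dict[str, list[Handler]] = defaultdict(list)
        self._queue: deque = deque()
        self.dead_letters: list[tuple[Any, str]] = []

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event_type: str, payload: Any) -> None:
        # Fan out: one queued job per subscribed handler, so a failing
        # handler is retried without re-running the ones that succeeded.
        for handler in self._handlers[event_type]:
            self._queue.append((handler, payload, 1))

    def drain(self) -> None:
        """Process queued jobs until the queue is empty."""
        while self._queue:
            handler, payload, attempt = self._queue.popleft()
            try:
                handler(payload)
            except Exception as exc:
                if attempt < self.max_attempts:
                    self._queue.append((handler, payload, attempt + 1))
                else:
                    self.dead_letters.append((payload, repr(exc)))
```

A handler can itself call `publish`, which is how a chain such as "document uploaded, then parsed, then CRM updated" is built from small independent steps.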

10. Engineer for AI Cost Optimisation

AI systems can become extremely expensive if poorly designed.

Key Optimisation Techniques

Prompt Optimisation

- Reduce unused tokens

- Keep prompts tight

- Cache prompts

Response Caching

Cache:

- Embeddings

- AI outputs

- Frequent queries
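One way to cache AI outputs for frequent queries is to key the cache on the model name plus a whitespace-normalised prompt. The sketch below keeps everything in memory and is only illustrative: `ResponseCache` is a hypothetical name, `generate` stands for whatever function calls your model, and a real system would likely add expiry and a shared store.

```python
import hashlib
import json
from typing import Callable


class ResponseCache:
    """Caches model outputs keyed by (model, normalised prompt)."""

    def __init__(self, generate: Callable[[str, str], str]) -> None:
        self._generate = generate  # assumed signature: (model, prompt) -> text
        self._store: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        # Collapse runs of whitespace so trivially different prompts share a key.
        normalised = " ".join(prompt.split())
        raw = json.dumps([model, normalised]).encode("utf-8")
        return hashlib.sha256(raw).hexdigest()

    def complete(self, model: str, prompt: str) -> str:
        key = self._key(model, prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        result = self._generate(model, prompt)
        self._store[key] = result
        return result
```

Tracking `hits` and `misses` makes it easy to check whether the cache is actually saving model calls.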

Model Routing

Use:

- Small models for simple tasks

- Large models only when necessary
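A routing rule can start out very simple. The sketch below picks a model tier from prompt length and an explicit flag; the tier names `small-model` and `large-model`, the word threshold, and the `needs_reasoning` flag are all placeholder assumptions, and real routers often use a classifier or task metadata instead.

```python
def route_model(
    prompt: str,
    needs_reasoning: bool = False,
    small_limit_words: int = 200,
) -> str:
    """Return the model tier to use for this request."""
    # Escalate to the large model only for long inputs or when the
    # caller marks the task as needing multi-step reasoning.
    if needs_reasoning or len(prompt.split()) > small_limit_words:
        return "large-model"
    return "small-model"
```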

Batch Processing

Process background jobs asynchronously where possible.

Streaming Responses

Stream tokens to the client as they are generated to improve perceived performance and reduce timeout failures.

AI Cost Optimisation Flow

Together, these techniques cut costs and improve performance.

11. Build Observability into the Platform

AI debugging is much harder than traditional debugging.

You need visibility into:

- Prompts and responses

- Model latency

- Token counts

- Failure rates

- Hallucinations

- Vector search quality

- Workflow failures

- User behaviour

AI Observability Architecture

Recommended Monitoring Stack

| Area | Tools |
| --- | --- |
| Logs | ELK / OpenSearch |
| Metrics | Prometheus |
| Dashboards | Grafana |
| Tracing | OpenTelemetry |
| AI Analytics | LangSmith / Helicone |

Production AI systems require deep operational visibility, logging, and monitoring for reliability and governance. If you skip this step, your ops team flies blind.
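Before adopting a full monitoring stack, the core measurements can be captured with a thin wrapper around every model call. This sketch records call counts, failures, token usage, and latency in memory; `LLMMetrics` and `observed_call` are hypothetical names, and `llm` is assumed to be any callable that returns a `(text, tokens_used)` pair.

```python
import time
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class LLMMetrics:
    calls: int = 0
    failures: int = 0
    total_tokens: int = 0
    latencies_ms: list[float] = field(default_factory=list)

    def failure_rate(self) -> float:
        return self.failures / self.calls if self.calls else 0.0


def observed_call(
    metrics: LLMMetrics,
    llm: Callable[[str], tuple[str, int]],
    prompt: str,
) -> str:
    """Call the model and record latency, tokens, and failures."""
    metrics.calls += 1
    start = time.perf_counter()
    try:
        text, tokens_used = llm(prompt)
    except Exception:
        metrics.failures += 1
        raise  # re-raise so callers can still handle the error
    finally:
        # Latency is recorded for both successful and failed calls.
        metrics.latencies_ms.append((time.perf_counter() - start) * 1000)
    metrics.total_tokens += tokens_used
    return text
```

The same counters map naturally onto Prometheus metrics or OpenTelemetry spans once those tools are in place.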

12. Secure the AI Platform Properly

AI systems are highly attractive targets for attackers.

Critical Areas

API Security

- OAuth2

- JWT authentication

- Rate limiting

- API gateways

AI-Specific Risks

- Prompt injection

- Data leakage

- Unauthorised retrieval

- Jailbreak attempts

Data Security

- Encryption at rest and in transit

- Secure file uploads

- Signed URLs
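To illustrate how signed URLs work, the sketch below signs a file path and an expiry time with HMAC-SHA256 and verifies both on access. It is a simplified illustration of the idea only: the function names, query parameter names, and example host are assumptions, and with AWS S3 you would normally use the presigned URLs generated by the AWS SDK instead of rolling your own.

```python
import hashlib
import hmac
import time
from typing import Optional
from urllib.parse import parse_qs, urlencode, urlparse


def _signature(path: str, expires: int, secret: bytes) -> str:
    return hmac.new(secret, f"{path}:{expires}".encode("utf-8"), hashlib.sha256).hexdigest()


def sign_url(base_url: str, path: str, secret: bytes,
             ttl_seconds: int = 300, now: Optional[float] = None) -> str:
    """Return a URL that is valid for ttl_seconds."""
    current = time.time() if now is None else now
    expires = int(current) + ttl_seconds
    query = urlencode({"expires": expires, "signature": _signature(path, expires, secret)})
    return f"{base_url}{path}?{query}"


def verify_url(url: str, secret: bytes, now: Optional[float] = None) -> bool:
    """Check that the URL is unexpired and was signed with our secret."""
    parsed = urlparse(url)
    params = parse_qs(parsed.query)
    try:
        expires = int(params["expires"][0])
        signature = params["signature"][0]
    except (KeyError, ValueError):
        return False
    current = time.time() if now is None else now
    if current > expires:
        return False
    expected = _signature(parsed.path, expires, secret)
    # Constant-time comparison avoids leaking information through timing.
    return hmac.compare_digest(expected, signature)
```

Because the path is part of the signed message, a link issued for one tenant's file cannot be edited to point at another tenant's file.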

Compliance

Depending on the industry:

- GDPR

- SOC 2

- HIPAA

- ISO 27001

AI Security Architecture

Build secure access control, audit logging, and a compliance-oriented architecture in from the beginning.

13. Implement CI/CD and DevOps Early

AI SaaS products ship changes rapidly. Don’t deploy code by hand, or you will bottleneck your own growth.

DevOps & CI/CD Pipeline

Infrastructure recommendations:

- Docker

- Kubernetes / ECS

- Terraform

- GitHub Actions

- AWS CloudWatch

- Prometheus

Modern SaaS engineering increasingly relies on automated CI/CD pipelines, Infrastructure-as-Code, and containerised deployments.

14. Set up AI Evaluation and Feedback Loops

AI products improve only when you measure what works.

Monitor:

- User satisfaction

- Prompt effectiveness

- Retrieval quality

- Hallucination frequency

- Response usefulness

- Workflow success rate
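Two of these signals are easy to compute from logged interactions: retrieval quality, measured here as recall@k, and response usefulness, measured as the share of positive user ratings. The record layout (`retrieved`, `relevant`, `helpful`) is an assumption made for this sketch, not a standard format.

```python
from typing import Optional


def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant documents that appear in the top k results."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)


def evaluate(cases: list[dict], k: int = 3) -> dict[str, Optional[float]]:
    """Aggregate retrieval recall and user feedback over logged cases."""
    recalls = [recall_at_k(c["retrieved"], c["relevant"], k) for c in cases]
    # 'helpful' is True/False from a thumbs up/down, or missing if unrated.
    ratings = [c["helpful"] for c in cases if c.get("helpful") is not None]
    return {
        "mean_recall_at_k": sum(recalls) / len(recalls) if recalls else 0.0,
        "helpful_rate": sum(ratings) / len(ratings) if ratings else None,
    }
```

Running this over a fixed set of labelled cases after every prompt or retrieval change gives a simple regression check for the feedback loop.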

AI Evaluation Workflow

Add Human-in-the-Loop Workflows

Important for:

- Legal AI

- Healthcare AI

- Financial systems

- Enterprise workflows

AI should augment operators, not blindly replace them.

15. Scale the Platform Gradually

A scalable AI SaaS roadmap typically looks like this:

| Stage | Focus |
| --- | --- |
| MVP | Validate workflow |
| Early Growth | Improve reliability |
| Mid-Scale | Optimise costs |
| Enterprise | Compliance + governance |
| Platform Scale | Multi-region + advanced AI ops |

SaaS Scaling Journey

Don’t introduce microservices or extra complexity until you need them.

16. Common Mistakes When Building AI SaaS Products

Over-Reliance on LLMs

LLMs should be one tool, not the entire solution.

Ignoring Workflow Design

AI without a workflow around it brings little value.

Poor Prompt Versioning

Prompt management becomes a major operational issue later.
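One lightweight way to avoid this is to treat prompts as versioned artefacts from the start. The registry below is a hypothetical in-memory sketch: every change to a template gets a new version number, and each rendered prompt reports which version produced it so that it can be logged alongside the response.

```python
from typing import Optional


class PromptRegistry:
    """Stores every version of each named prompt template."""

    def __init__(self) -> None:
        self._versions: dict[str, list[str]] = {}

    def register(self, name: str, template: str) -> int:
        """Add a new version of a prompt and return its 1-based version number."""
        versions = self._versions.setdefault(name, [])
        versions.append(template)
        return len(versions)

    def render(self, name: str, version: Optional[int] = None, **variables: str) -> tuple[int, str]:
        """Render a specific version, or the latest one if none is given."""
        versions = self._versions[name]
        chosen = len(versions) if version is None else version
        if not 1 <= chosen <= len(versions):
            raise ValueError(f"unknown version {chosen} for prompt {name!r}")
        return chosen, versions[chosen - 1].format(**variables)
```

Pinning a version makes it possible to roll back a prompt change or compare two versions on the same inputs.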

No Cost Controls

Token overruns erode margins quickly.

Weak Data Strategy

AI quality depends heavily on data quality.

Skipping Observability

Without observability, AI failures go unnoticed and hurt users.

17. Recommended End-to-End AI SaaS Architecture

Final Thoughts

Building an AI SaaS product today involves far more than integrating GPT APIs into your product.

Successful AI platforms combine:

- Strong workflow design

- Scalable SaaS architecture

- Smart AI orchestration

- Secure multi-tenant systems

- Real observability

- Cost-aware operations

- Continuous AI evaluation

The winning AI SaaS companies will not simply “add AI features.” They will build intelligent operational systems that really help customers do their work.

The future is bright for AI-native platforms if you design them to scale, stay reliable, and automate at their core.